YouTube is using artificial intelligence and machine learning to replace video backgrounds without a green screen. The new feature should not come as a surprise: Google previously said it was working on video-editing technology that would not require common tools such as a green backdrop.

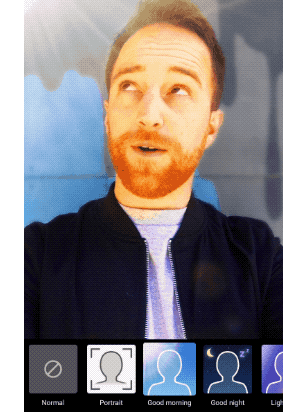

The new technology, dubbed Mobile Real-time Video Segmentation, replaces the background of a video in real time. For now, YouTube is running a beta version of the technology with only a handful of content creators.

Google stated that testing has been limited to a small group, but added that as the segmentation technology improves and expands, it will be integrated into Google’s broader Augmented Reality services. Google’s Pixel 2 already comes with an AI-powered portrait mode for photos, paving the way for Google to implement the technology for mobile video capture, notes SlashGear.

Google has not given a specific timeline for a wider rollout of the feature. Mobile Real-time Video Segmentation, the background replacement tool, is available in YouTube’s new “stories” video format. Though the feature is not perfect yet, it works well for a beta.

As with other AI-based imaging programs, Google started its ambitious program by having people manually mark the background in more than 10,000 images. The AI was then trained on this data set to separate background from foreground. Initially, Google trained the AI on single images. For video, once the software has masked the background in the first frame, the program uses the same mask to help predict the background in the next frame.

If the second frame differs only slightly from the first, such as a small movement by the person talking on camera, the program adjusts the mask. If the next frame is significantly different from the last, for instance because someone else joins the video, the software discards that mask and creates a new one.
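The reuse-or-reset behaviour described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Google's implementation: the `next_mask` function, the `reset_threshold` value, and the toy `threshold_segment` stand-in for a trained model are all assumptions made for the example.

```python
from typing import Callable, List, Optional

Frame = List[List[float]]  # grayscale frame, pixel values in [0, 1]
Mask = List[List[float]]   # 1.0 = foreground, 0.0 = background


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute pixel difference between two same-sized frames."""
    total, count = 0.0, 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def next_mask(
    prev_frame: Optional[Frame],
    prev_mask: Optional[Mask],
    frame: Frame,
    segment: Callable[[Frame, Optional[Mask]], Mask],
    reset_threshold: float = 0.25,  # hypothetical value, chosen for the demo
) -> Mask:
    """Reuse the previous mask as a hint unless the scene changed too much."""
    if prev_frame is None or prev_mask is None:
        return segment(frame, None)  # first frame: nothing to reuse
    if mean_abs_diff(prev_frame, frame) > reset_threshold:
        return segment(frame, None)  # big change: discard the old mask
    return segment(frame, prev_mask)  # small change: adjust the old mask


def threshold_segment(frame: Frame, prior: Optional[Mask]) -> Mask:
    """Toy stand-in for a trained model: bright pixels count as foreground."""
    mask = [[1.0 if p > 0.5 else 0.0 for p in row] for row in frame]
    if prior is not None:
        # Blend with the previous mask so the result changes smoothly.
        mask = [
            [0.5 * m + 0.5 * q for m, q in zip(mask_row, prior_row)]
            for mask_row, prior_row in zip(mask, prior)
        ]
    return mask
```

In this sketch the previous mask is only a hint: when two frames are close, the new mask is blended with the old one, and when they differ sharply the old mask is ignored entirely.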

In its research blog, Google talked about the technical details of the feature at length. “Our new segmentation technology allows creators to replace and modify the background, effortlessly increasing videos’ production value without specialized equipment,” read the blog post.
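Once a mask exists, replacing the background comes down to blending each pixel of the original frame with the new backdrop. The following is a minimal sketch of that blend, assuming RGB pixels stored as tuples and a mask with values between 0 (background) and 1 (foreground); the `composite` function is a hypothetical helper, not part of any YouTube or Google API.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # RGB, each channel 0-255
Image = List[List[Pixel]]
Mask = List[List[float]]      # 1.0 = keep the frame, 0.0 = show the backdrop


def composite(frame: Image, backdrop: Image, mask: Mask) -> Image:
    """Blend frame over backdrop: out = mask * frame + (1 - mask) * backdrop."""
    result = []
    for frame_row, backdrop_row, mask_row in zip(frame, backdrop, mask):
        row = []
        for fg, bg, m in zip(frame_row, backdrop_row, mask_row):
            row.append(tuple(round(m * f + (1.0 - m) * b) for f, b in zip(fg, bg)))
        result.append(row)
    return result
```

Mask values between 0 and 1 produce a soft transition at the edge of the subject instead of a hard cut-out.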

Google has also trained the AI to distinguish facial features such as the eyes, hair, glasses, and mouth from everything else in the background and foreground. The search giant says the solution is simple and lightweight, able to run “10-30 times faster than existing photo segmentation models.”

Separating the background is impressive in itself, but Google took it further by making the program run on the limited hardware of a smartphone rather than a desktop computer. To achieve this, Google’s programmers made several adjustments to the segmentation model, such as downsampling and trimming down the number of channels, to improve speed. The team then worked to improve quality by adding layers that smooth the edges between the foreground and background.
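To give a rough feel for two of these ideas, the sketch below shrinks an image by averaging blocks of pixels (downsampling) and softens a hard mask with a 3x3 box blur (edge smoothing). Both functions are simple stand-ins chosen for illustration; the article does not say which operations Google actually uses, and in the real system the smoothing is done by layers inside the model rather than by a fixed blur.

```python
from typing import List

Grid = List[List[float]]


def downsample(image: Grid, factor: int = 2) -> Grid:
    """Shrink an image by averaging each factor x factor block of pixels."""
    height = len(image) // factor
    width = len(image[0]) // factor
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            block = [
                image[y * factor + dy][x * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def smooth_mask(mask: Grid) -> Grid:
    """Soften mask edges by averaging each pixel with its in-bounds neighbours."""
    height, width = len(mask), len(mask[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            neighbours = [
                mask[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(sum(neighbours) / len(neighbours))
        out.append(row)
    return out
```

Halving the width and height cuts the number of pixels to a quarter, which is why downsampling is such a common first step when speed matters.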

According to Google, these improvements let the tool replace the background in real time, running at over 100 fps on an iPhone 7 and over 40 fps on the Google Pixel 2. Google claims the model reaches an accuracy of 94.8%, notes Digital Trends. Google states that the Real-time Video Segmentation tool uses various optimization techniques to reduce the amount of data needed to separate the foreground from the background in a video.
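Those frame rates translate directly into how much time the tool has to process each frame. A quick calculation, using a hypothetical helper named `frame_budget_ms`:

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


print(frame_budget_ms(100))  # 10.0 ms per frame at 100 fps
print(frame_budget_ms(40))   # 25.0 ms per frame at 40 fps
```

Since the reported rates are "over" 100 fps and 40 fps, the actual time spent on each frame is under 10 ms on the iPhone 7 and under 25 ms on the Pixel 2.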

Google’s new tech, however, does not mean that the end of the green screen is anywhere near. The green screen remains significant to filmmaking. Google’s new technology looks promising, but it has some limitations for now. In some cases, edges show ugly halos and other anomalies, notes TechTimes. Further, Google has yet to say how its new AI tool affects battery life or whether it requires a depth-sensing camera. Also, all the videos Google shared had people in them, so it is not known whether the tech works on objects as well.

The Real-time Video Segmentation tool is the latest new feature for YouTube, which recently added a dark theme to the platform. The video streaming service also expanded its “Offline YouTube” feature to more countries; it is now available in 135 countries.