MIT (Massachusetts Institute of Technology) puts a lot of effort into artificial intelligence (AI) research. MIT technology introduced earlier this year can see people move behind walls, and students at the institute taught an AI how to cook (although the results were rather funny). The most recent research published on MIT News focuses on using deep learning to reveal objects in the dark.

According to George Barbastathis, a professor of mechanical engineering at MIT who participated in the research, this new approach to artificial intelligence could have significant applications in medicine.

“In the lab, if you blast biological cells with light you burn them, and there is nothing left to image,” he said in a news release. “When it comes to X-ray imaging, if you expose a patient to X-rays, you increase the danger they may get cancer. What we’re doing here is — you can get the same image quality but with a lower exposure to the patient. And in biology, you can reduce the damage to biological specimens when you want to sample them.”

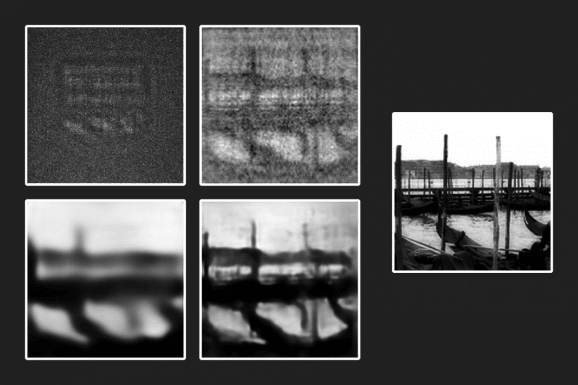

The researchers used grainy images of a pattern, taken in extremely dim conditions of around one photon per pixel, which is significantly less light than a normal camera would register in a dark room.
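To give a feel for how little information "one photon per pixel" leaves, here is a minimal sketch that simulates photon-limited capture. It is not the researchers' setup: it only assumes that photon arrivals follow a Poisson distribution (standard shot noise), and the function names and the mapping from pixel intensity to expected photon count are hypothetical choices made for illustration.

```python
import math
import random


def sample_photon_count(mean_photons, rng):
    """Draw one Poisson-distributed photon count.

    Uses Knuth's multiplication method, which is adequate for the
    small means (around one photon) considered here.
    """
    threshold = math.exp(-mean_photons)
    count = 0
    product = rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_low_light(image, photons_per_pixel, seed=0):
    """Simulate a photon-limited capture of `image`.

    `image` is a 2D list of intensities in [0, 1]. Assumption: a pixel
    with intensity v receives v * photons_per_pixel photons on average.
    Returns a 2D list of integer photon counts with the same shape.
    """
    rng = random.Random(seed)
    return [
        [sample_photon_count(v * photons_per_pixel, rng) for v in row]
        for row in image
    ]


if __name__ == "__main__":
    # A tiny checkerboard "pattern": bright pixels average one photon each.
    pattern = [[(x + y) % 2 for x in range(8)] for y in range(8)]
    for row in simulate_low_light(pattern, photons_per_pixel=1.0):
        print(" ".join(str(c) for c in row))
```

At an average of one photon, many bright pixels record nothing at all, which is why the raw captures look so grainy.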

The team taught the algorithm to recognize the ripples in the details of those images, which led the scientists to conclude that the technique could be used in medicine. Artificial intelligence could be used to detect small parts of the body, such as biological tissues and cells, as well as to interpret imagery taken in poor light conditions.

“Invisible objects can be revealed in different ways, but it usually requires you to use ample light,” Barbastathis said. “What we’re doing now is visualizing the invisible objects, in the dark. So it’s like two difficulties combined. And yet we can still do the same amount of revelation.”

Deep-learning neural networks can process large quantities of data faster than a team of scientists could, as long as they are “fed” with proper data. If a network is trained with the right set of methods and images, it can recognize and classify completely new images. Neural networks are widely used in computer vision and image recognition, which means they could be highly beneficial to science. Barbastathis and his team also developed algorithms that could reconstruct transparent objects from images taken in the opposite conditions, with plenty of light.

The team repeated the experiment using a new set of data, which consisted of more than 10,000 objects. This time, the objects were more general and varied, in order to teach the AI more. The images included people, places and animals. Finally, after the “feeding” finished, the team showed the AI a new image.

They let it reconstruct the image of the objects in the dark on its own. The reconstructions were closer to the original when the algorithm used “physics-informed reconstruction,” which applies the physical laws governing light, than when it did not use that information about the “law of light.”

“We have shown that deep learning can reveal invisible objects in the dark,” Alexandre Goy, a lead author of the paper published in Physical Review Letters, said in a news release. “This result is of practical importance for medical imaging to lower the exposure of the patient to harmful radiation, and for astronomical imaging.”