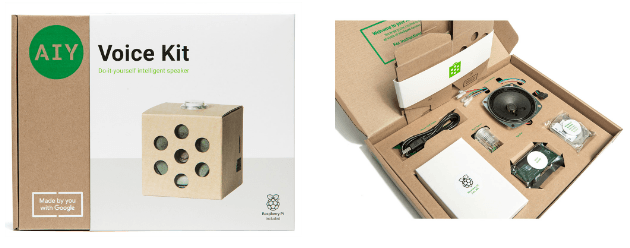

Google has updated its AIY Vision Kit and AIY Voice Kit to make them more approachable for newcomers. The earlier versions were somewhat complicated to get started with, since users had to supply their own Raspberry Pi and other hardware; now everything needed comes in the box.

“We’re taking the first of many steps to help educators integrate AIY into STEM lesson plans and help prepare students for the challenges of the future by launching a new version of our AIY kits,” Google said in a blog post.

Google’s updated AIY Vision and AIY Voice kits come with a Raspberry Pi Zero WH board and a pre-provisioned SD card. The Vision Kit also includes a Raspberry Pi Camera v2. The search giant believes the new kits will speed up setup, as users will no longer have to shop for additional parts.

Google AIY Projects (like DIY, but with artificial intelligence, hence AIY), launched in 2017, are simple hardware kits that help users build AI-powered devices such as an Assistant speaker or a camera with image recognition. The kits are well suited to schools and STEM projects.

According to the search giant, since the launch of the kits, it has witnessed robust demand, mainly from the STEM audience “where parents and teachers alike have found the products to be great tools for the classroom.”

Along with the updated Vision and Voice kits, Google has also introduced an AIY companion app for Android. The app, whose iOS and Chrome versions are in the works, helps with setup and configuration. The kits will still work with a monitor, keyboard and mouse. Note that the app can only be used after the initial setup is done.

The search giant has also updated its AIY website to provide clearer instructions for young developers. The revamped website also “includes a new AIY Models area, showcasing a collection of neural networks designed to work with AIY kits,” the search giant says.

The Google AIY Vision and Voice kits, priced at $90 and $50, respectively, are now available through Target’s online and retail stores. The kits will also be available through other stores globally. Though the new kits cost more, they are worth the price, as they include everything needed to build the projects.

These Google AIY Projects are a nice initiative from the search giant to push communities to improve their STEM programs in schools. The Google AIY Voice Kit helps users develop a natural language recognizer by connecting to Google Assistant. Like the preceding VR viewers, the Voice device will be made from cardboard. The AIY Vision Kit, on the other hand, uses TensorFlow’s machine learning models to build an image recognition device that can identify objects.

Apart from the Google AIY Projects, the tech giant is also working on other projects to make machine learning more accessible. The company recently launched a browser-based version of TensorFlow called TensorFlow.js. TensorFlow, which is Google’s machine learning framework, will help users do more immersive experiments with their AIY Vision Kit and AIY Voice Kit. Users are already able to download pre-trained models for the AIY Vision Kit from the Google AIY Projects site.

With all these initiatives and tools, Google hopes developers will find more uses for smart speakers and smart cameras. When announcing the updated kits, the search giant also expressed interest in teaching computer science skills to students.

Meanwhile, Google continues to experiment with artificial intelligence (AI). The search giant recently rolled out Semantic Experiences, which are websites that demonstrate AI’s ability to understand how we speak. The two publicly available experiments are Talk to Books and Semantris, a word-association game. Talk to Books lets users converse with a machine-learning-trained algorithm, which answers questions using relevant passages from existing book text.

With Talk to Books, users type a statement or question, and the AI returns sentences from books that best relate to the typed content. According to the company, the algorithm does not use keyword matching; rather, it was trained on a “billion conversation-like pairs of sentences” to identify an apt response.
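
To make the difference from keyword matching concrete, here is a minimal Python sketch of ranking passages by similarity of meaning rather than shared words. It is not Google’s model: the tiny `TOY_VECTORS` table, its three invented "meaning" dimensions, and the helper names `embed`, `cosine` and `rank` are all hypothetical stand-ins for what a trained system would learn from data.

```python
import math

# Hypothetical hand-made "meaning" vectors: (emotion, food, travel).
# A real system learns such vectors from data; these values are invented.
TOY_VECTORS = {
    "sad": (1.0, 0.0, 0.0),
    "sorrow": (0.9, 0.0, 0.1),
    "joy": (0.8, 0.1, 0.0),
    "bread": (0.0, 1.0, 0.0),
    "bake": (0.0, 0.9, 0.0),
    "train": (0.0, 0.0, 1.0),
    "journey": (0.1, 0.0, 0.9),
}


def embed(sentence):
    """Average the toy vectors of known words; unknown words are ignored."""
    words = [w.strip(".,?!").lower() for w in sentence.split()]
    vectors = [TOY_VECTORS[w] for w in words if w in TOY_VECTORS]
    if not vectors:
        return (0.0, 0.0, 0.0)
    return tuple(sum(axis) / len(vectors) for axis in zip(*vectors))


def cosine(a, b):
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query, passages):
    """Return passages sorted from most to least similar to the query."""
    q = embed(query)
    return sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)


passages = [
    "Bake the bread until golden.",
    "The journey by train took all night.",
    "Joy comes after sorrow.",
]
ranked = rank("Why do I feel sad?", passages)
print(ranked[0])
```

In this toy setup the query shares no keyword with the top-ranked passage about joy and sorrow; the passage wins only because its invented vectors point in a similar direction to the vector for "sad".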